Target audience: Expert

Estimated reading time: 8'

Newsletter: Geometric Learning in Python

Are you frustrated with the linear assumptions of Principal Component Analysis (PCA) or losing the global structure and relationships in your data with t-SNE?

Leveraging Riemannian geometry, Uniform Manifold Approximation and Projection (UMAP) might be the solution you're looking for.

What you will learn: How to leverage to Uniform Manifold Approximation and Projection method as a reliable non-linear dimensionality reduction technique that preserve the global distribution over low dimension space (Manifold).

The complete article, featuring design principles, detailed implementation, in-depth analysis, and exercises, is available in Dive into Uniform Manifold Approximation & Projection

Notes:

- Environments: Python 3.11, SciKit-learn 1.5.1, Matplotlib 3.9.1, umap-learn 0.5.6

- Source code is available at Github.com/patnicolas/Data_Exploration/umap

- To enhance the readability of the algorithm implementations, we have omitted non-essential code elements like error checking, comments, exceptions, validation of class and method arguments, scoping qualifiers, and import statements.

Introduction

Dimension reduction algorithms can be classified into two categories:

- Models that maintain the global pairwise distance structure among all data samples, such as Principal Component Analysis (PCA) and Multidimensional Scaling (MDS).

- Models that preserve local distances, including t-distributed Stochastic Neighbor Embedding (t-SNE) and Laplacian Eigenmaps.

Uniform Manifold Approximation and Projection (UMAP) leverages research done for Laplacian eigenmaps to tackle the problem of uniform data distributions on manifolds by combining Riemannian geometry with advances in fuzzy simplicial sets [ref 1].

t-SNE is considered the leading method for dimension reduction in visualization. However, compared to t-SNE, UMAP maintains more of the global structure and offers faster run time performance.

t-SNE

t-SNE is a widely used technique for reducing the dimensionality of data and enhancing its visualization. It's often compared to PCA (Principal Component Analysis), another popular method. This article examines the performance of UMAP and t-SNE using two well-known datasets, Iris and MNIST.

t-SNE is an unsupervised, non-linear technique designed for exploring and visualizing high-dimensional data [ref 2]. It provides insights into how data is structured in higher dimensions by projecting it into two or three dimensions. This capability helps reveal underlying patterns and relationships, making it a valuable tool for understanding complex datasets.

UMAP

Benefits

- Ease of Use: Designed to be easy to use, with a simple API for fitting and transforming data.

- Scalability: Can handle large datasets efficiently.

- Flexibility: Works with a variety of data types and supports both supervised and unsupervised learning.

- Integration: Integrates with other Python scientific computing libraries in Python, such as Numpy, PyTorch and Scikit-learn.

The algorithm behind UMAP involves:

- Constructing a high-dimensional graph representation of the data.

- Capturing global relationships within the data.

- Encoding these relationships using a simplicial set.

- Applying stochastic gradient descent (SGD) to optimize the data's representation in a lower dimension, akin to the process used in autoencoders.

This method allows UMAP to maintain both local and global data structures, making it effective for various data analysis and visualization tasks.

For the mathematically inclined, the simplicial set captures the local and global relationships between two points xi and xj as: \[sim(x_{i}, x_{j})=e^{ - \frac{||x_{i}-x_{j}|| ^{2}}{\sigma_{i} \sigma_{j}}}\]with sigma parameters as scaler.

The cost function for all the points xi with their representation yi is \[L=\sum_{i=1}^{n}\sum_{j=1}^{n}\left ( sim(x_{i}, x_{j}) - sim(y_{i}, y_{j}) \right )^2\]

Python module is installed through the usual pip utility.

pip install umap-learn

The reader is invited to consult the documentation [ref 3].

Datasets

Let's consider the two datasets used for this evaluation:

- MNIST: The MNIST database consists of 60,000 training examples and 10,000 test examples of handwritten digits. This dataset was originally created by Yann LeCun [ref 4].

- IRIS: This dataset includes 150 instances, divided into three classes of 50 instances each, with each class representing a different species of iris plant [ref 5].

class DataSrc(Enum):

MNIST = 'mnist'

IRIS = 'iris'

First, we implement a data set loader for these two data sets using sklearn datasets module.

from sklearn.datasets import load_digits, load_iris

class DatasetLoader(object):

def __init__(self, dataset_src: DataSrc) -> None:

match dataset_src:

case DataSrc.MNIST:

digits = load_digits()

self.data = digits.data

self.color = digits.target.astype(int)

case DataSrc.IRIS:

images = load_iris()

self.data = images.data

self.names = images.target_names

self.color = images.target.astype(int)

self.dataset_src = dataset_src

t-SNE

Evaluation code

Let's wrap the evaluation of t-SNE algorithm for IRIS and MNIST data in a class TSneEval. The constructor initializes the Scikit-learn class TSNE with the appropriate number of components (dimension 2 or 3). The class TSneEval inherits for sklearn data loaders by subclassing DatasetLoader.

class TSneEval(DatasetLoader): def __init__(self, data_src: DataSrc, n_components: int) -> None: super(TSneEval, self).__init__(data_src)

# Instantiate the Sklearn t-SNE model

self.t_sne = TSNE(n_components=n_components)

The evaluation method, __call__, utilizes the Matplotlib library to visualize data clusters. It generates plots to display the clusters for each digit in the MNIST dataset and for each flower category in the Iris dataset.

def __call__(self, cmap: AnyStr) -> NoReturn:

import matplotlib.pyplot as plt

embedding = self.t_sne.fit_transform(self.data)

x = embedding[:, 0]

y = embedding[:, 1]

n_ticks = 10

plt.scatter(x=x, y=y, c=self.color, cmap=cmap, s=4.0)

plt.colorbar( boundaries=np.arange(n_ticks+1) - 0.5).set_ticks(np.arange(n_ticks) )

plt.title(f'UMAP {self.dataset_src} {self.umap.n_neighbors} neighbors' f'min_dist: {self.umap.min_dist}')

plt.show()

Output

The simple previous code snippet produces the t-SNE plots for MNIST and IRIS data sets.

MNIST dataset

First let's look at the 10 data clusters (1 per digit) in a two and 3 dimension plot.

IRIS dataset

The data related to each of the 3 types or Iris flowers are represented in 2 and 3 dimension plots

UMAP

Evaluation

We follow the same procedure for UMAP visualization as we do for t-SNE. The constructor of the UMAPEval wrapper class initializes the UMAP model with the specified number of neighbors (n_neighbors) and minimum distance (min_dist) parameters, which are used to represent the data.

import umap

class UMAPEval(DatasetLoader):

def __init__(self, dataset_src: DataSrc, n_neighbors: int, min_dist: float) -> None:

super(UMAPEval, self).__init__(dataset_src) # Instantiate the UMAP model self.umap = umap.UMAP(random_state=42,

n_neighbors=n_neighbors, min_dist=min_dist)

Similar to the t-SNE evaluation, the __call__ method uses the Matplotlib library to visualize data clusters.

def __call__(self, cmap: AnyStr) -> NoReturn:

embedding = self.umap.fit_transform(self.data)

plt.scatter(

x =embedding[:, 0],

y =embedding[:, 1],

c =self.color,

cmap =cmap,

s=4.0)

....... // Similar code as t-SNE

plt.show()

Output

MNIST dataset

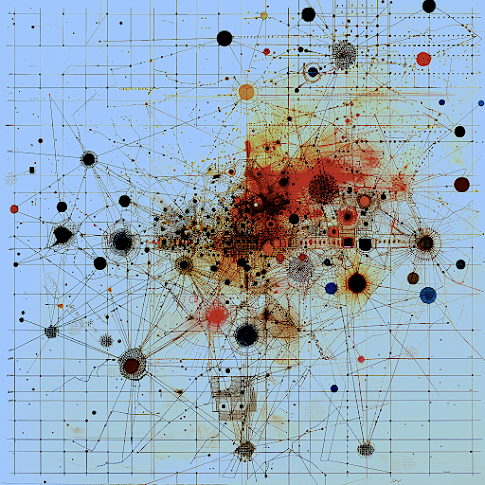

Fig. 5 UMAP plot for MNIST data with 8 neighbors and min distance 0.3

Fig. 6 UMAP plot for MNIST data with 8 neighbors and min distance 0.6

Fig. 7 UMAP plot for MNIST data with 4 neighbors and min distance 0.8

Tuning

Number of neighbors

The parameter that defines the number of neighbors in UMAP, n_neighbors, determines the balance between global and local distances in the data visualization. A smaller number of neighbors means the local neighborhood is defined by fewer data points, which is ideal for detecting small clusters and capturing fine details. On the other hand, a larger number of neighbors helps in capturing broader, global patterns, but it may oversimplify the local relationships, potentially missing finer details in the data.

min_dist (compactness in low dimension)

This parameter plays a crucial role in determining the appearance of the low-dimensional representation, specifically affecting the clustering and spacing of data points.

A small min_dist value allows UMAP to pack points closer together in the low-dimensional space. It emphasizes the preservation of local structure and can make clusters more distinct.

A larger min_dist value enforces a minimum separation between points in the low-dimensional space. UMAP visualizations with a large min_dist may show less clustering and more continuous distributions of data points.

The choice of min_dist thus influences the trade-off between preserving local versus global structures.