Target audience: Intermediate

Estimated reading time: 4'

“There is peace even in the storm.” Vincent Van Gogh

Certain challenges in our everyday life demand immediate attention, with severe storms and tornadoes being prime examples. This article demonstrates the integration of Kafka event queues with Spark's distributed structured streaming, crafting a powerful and responsive system for predicting potentially life-threatening weather events.

Apache Spark

Spark structured streaming

Use case

Overview

Data streaming pipeline

Implementation

Weather data sources

Weather tracking pipeline

References

Important notes:

- Software versions utilized in the source code include Scala 2.12.15, Spark 3.4.0, and Kafka 3.4.0.

- This article focuses on the data streaming pipeline. Explaining the storm prediction model falls outside its scope.

- You can find the source code on the Github-Streaming library [ref 1]

- Note that error messages and the validation of method arguments are not included in the provided code examples.

Streaming frameworks

The goal is to develop a service that warns local authorities about severe storms or tornadoes. Given the urgency of analyzing data and suggesting actions, our alert system is designed as a streaming data flow.

The main elements include:

- A Kafka message queue, where each monitoring device type is allocated a specific topic, enabling the routing of different alerts to the relevant agency.

- The Spark streaming framework to handle weather data processing and to disseminate forecasts of serious weather disruptions.

- A predictive model specifically for severe storms and tornadoes.

Apache Kafka

Apache Kafka is an event streaming platform enabling:

- Publishing (writing) and subscribing (reading) to event streams, as well as continuously importing/exporting data from various systems.

- Durable and reliable storage of event streams for any desired duration.

- Real-time or retrospective processing of event streams.

You can start and stop the Kafka service using shell scripts in the following way:

zookeeper-server-stop

kafka-server-stop

sleep 2

zookeeper-server-start $KAFKA_ROOT/kafka/config/zookeeper.properties &

sleep 1

ps -ef | grep zookeeper

kafka-server-start $KAFKA_ROOT/kafka/config/server.properties &

sleep 1

ps -ef | grep kafka

For testing purposes, we deploy Apache Kafka on a local host listening on the default port 9092. Here are some useful commands:

To list existing topics:

kafka-topics --bootstrap-server localhost:9092 --list

To create a new topic (e.g., doppler):

kafka-topics --bootstrap-server localhost:9092 --topic doppler --create --replication-factor 1 --partitions 2

To list the messages or events currently queued for a given topic (e.g., weather):

kafka-console-consumer --topic weather --from-beginning --bootstrap-server localhost:9092

Here is an example of libraries required to build a Kafka consumer and producer application.

<scala.version>2.12.15</scala.version>

<kafka.version>3.4.0</kafka.version>

<dependency>

<groupId>org.apache.kafka</groupId>

<artifactId>kafka-streams</artifactId>

<version>${kafka.version}</version>

</dependency>

<dependency>

<groupId>org.apache.kafka</groupId>

<artifactId>kafka-streams-scala_2.12</artifactId>

<version>${kafka.version}</version>

</dependency>

Apache Spark

Apache Spark is a free, open-source framework for cluster computing, specifically designed to process data in real time via distributed computing. Its primary applications include:

- Analytics: Spark's capability to quickly produce responses allows for interactive data handling, rather than relying solely on predefined queries.

- Data Integration: Often, the data from various systems is inconsistent and cannot be combined for analysis directly. To obtain consistent data, processes like Extract, Transform, and Load (ETL) are employed. Spark streamlines this ETL process, making it more cost-effective and time-efficient.

- Streaming: Managing real-time data, such as log files, is challenging. Spark excels in processing these data streams and can identify and block potentially fraudulent activities.

- Machine Learning: The growing volume of data has made machine learning techniques more viable and accurate. Spark's ability to store data in memory and execute repeated queries swiftly facilitates the use of machine learning algorithms.

Here is an example of libraries required to build a Spark application:

<spark.version>3.4.0</spark.version>

<scala.version>2.12.15</scala.version>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-core_2.12</artifactId>

<version>${spark.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-sql_2.12</artifactId>

<version>${spark.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-sql-kafka-0-10_2.12</artifactId>

<version>${spark.version}</version>

</dependency>

Spark structured streaming

Spark Structured Streaming, built atop Spark SQL, is a streaming data engine. It processes data in increments, continually updating outcomes as additional streaming data is received [ref 4]. A Spark Streaming application consists of three primary parts: the source (input), the processing engine (business logic), and the sink (output).

For comprehensive details on setting up, applying, and deploying the Spark Structured Streaming library, visit Boost real-time processing with Spark Structured Streaming.

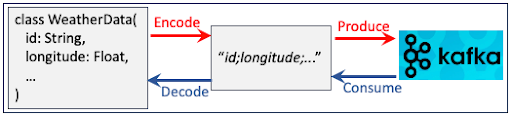

In our specific scenario, data input is managed via Kafka consumers, and data output is handled through Kafka producers [ref 5].

Use case

Overview

This use case involves gathering data from weather stations and Doppler radars, then merging these data sources based on location and time stamps. After consolidation, the unified data is sent to a model that forecasts potentially hazardous storms or tornadoes. The resulting predictions are then relayed back to the relevant authorities (such as emergency personnel, newsrooms, law enforcement, etc.) through Kafka.

The two sources of data are:

- Weather stations, which collect temperature, pressure, and humidity.

- Doppler radars, which provide advanced data on wind intensity and direction.

In practice, meteorologists receive overlapping data features from weather stations and Doppler radars, which they then reconcile and normalize. However, in our streamlined use case, we choose to omit these overlapping features.

The process for gathering data from weather data tracking devices is not covered in this context, as it is not pertinent to the streaming pipeline.

Data streaming pipeline

The monitoring streaming pipeline is structured into three phases:

- Kafka queue.

- Spark's distributed structured streams.

- A variety of storm and tornado prediction models, developed using the PyTorch library and accessible via REST API.

As noted in the introductory section, the PyTorch-based model is outside this post's focus and is mentioned here only for context.

Storm tracking streaming pipeline

Data gathered from weather stations and Doppler radars is fed into the Spark engine, where both streams are combined and harmonized based on location and timestamp. This unified dataset is then employed for training the model. During the inference phase, predictions are streamed back to Kafka.

One notable aspect of the implementation is that both weather and Doppler data are read from Kafka as batch queries, while the storm forecasts are streamed back to Kafka.

Implementation

Weather data sources

Weather tracking device

Initially, we need to establish the fundamental characteristics of weather tracking data points: location (longitude and latitude) and time stamp. These are crucial for correlating and synchronizing data from different devices. The details on how these data points are synchronized will be explained in the section Streaming processing: Data aggregation.

trait TrackingData[T <: TrackingData[T]] {

self =>

val id: String // Identifier for the weather tracking device

val longitude: Float // Longitude for the weather tracking device

val latitude: Float // Latitude for the weather tracking device

val timeStamp: String // Time stamp data is collected

}

Weather station

We collect temperature, pressure, and humidity from weather stations for a given location (longitude, latitude) and time (timeStamp). A weather station record, WeatherData, therefore inherits its tracking attributes from TrackingData.

case class WeatherData (

override val id: String, // Identifier for the weather station

override val longitude: Float, // Longitude for the weather station

override val latitude: Float, // Latitude for the weather station

override val timeStamp: String = System.currentTimeMillis().toString, // Time stamp data is collected

temperature: Float, // Temperature (Fahrenheit) collected at timeStamp

pressure: Float, // Pressure (millibars) collected at timeStamp

humidity: Float) extends TrackingData[WeatherData] { // Humidity (%) collected at timeStamp

// Random generator for testing

def rand(rand: Random, alpha: Float): WeatherData = this.copy(

timeStamp = (timeStamp.toLong + 10000L + rand.nextInt(2000)).toString,

temperature = temperature*(1 + alpha*rand.nextFloat()),

pressure = pressure*(1 + alpha*rand.nextFloat()),

humidity = humidity*(1 + alpha*rand.nextFloat())

)

// Encoded weather station data produced to Kafka queue

override def toString: String =

s"$id;$longitude;$latitude;$timeStamp;$temperature;$pressure;$humidity"

}

For testing purposes, we randomly perturb the values of some attributes using the formula \[x(1 + \alpha r_{[0,1]})\] with alpha ~ 0.1 and r drawn from a uniform distribution over [0, 1].

The string representation, with ; as the delimiter, is used to serialize the tracking data for Kafka.
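The perturbation formula above can be sketched in isolation as follows. This is a minimal illustration, not the article's code: the object name PerturbationSketch and the helpers perturb and series are hypothetical, and the seed is only there to make test data reproducible.

```scala
import scala.util.Random

object PerturbationSketch {
  // Scales x by a random factor drawn from [1, 1 + alpha)
  def perturb(x: Float, alpha: Float, rand: Random): Float =
    x * (1 + alpha * rand.nextFloat())

  // Builds n readings, starting at `start`, each perturbed from the previous one
  def series(start: Float, alpha: Float, n: Int, seed: Long): Seq[Float] = {
    val rand = new Random(seed)
    Iterator.iterate(start)(perturb(_, alpha, rand)).take(n).toList
  }
}
```

Because r is never negative, each generated value is at least as large as the previous one, so a long series of positive readings drifts upward. That is acceptable for smoke tests but worth keeping in mind when generating long sequences.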

Doppler radar

We retrieve wind-related measurements (such as wind shear, average wind speed, gust speed, and direction) from the Doppler radar for specific locations and time intervals. Similar to the weather station, the 'DopplerData' class is a sub-class of the 'TrackingData' trait.

case class DopplerData(

override val id: String, // Identifier for the Doppler radar

override val longitude: Float, // Longitude for the Doppler radar

override val latitude: Float, // Latitude for the doppler radar

override val timeStamp: String, // Time stamp data is collected

windShear: Boolean, // Is it a wind shear?

windSpeed: Float, // Average wind speed

gustSpeed: Float, // Maximum wind speed

windDirection: Int) extends TrackingData[DopplerData] {

// Random generator

def rand(rand: Random, alpha: Float): DopplerData = this.copy(

timeStamp = (timeStamp.toLong + 10000L + rand.nextInt(2000)).toString,

windShear = rand.nextBoolean(),

windSpeed = windSpeed * (1 + alpha * rand.nextFloat()),

gustSpeed = gustSpeed * (1 + alpha * rand.nextFloat()),

windDirection = {

val newDirection = windDirection * (1 + alpha * rand.nextFloat())

if(newDirection > 360.0) newDirection.toInt%360 else newDirection.toInt

}

)

// Encoded Doppler radar data produced to Kafka

override def toString: String = s"$id;$longitude;$latitude;$timeStamp;$windShear;$windSpeed;" +

s"$gustSpeed;$windDirection"

}

Weather tracking streaming pipeline

Streaming tasks

To enhance understanding, we simplify and segment the processing engine (business logic) into two parts: the aggregation of input data and the actual model prediction.

The streaming pipeline comprises four key tasks:

- Source: Retrieves data from weather stations and Doppler radars via Kafka queues.

- Aggregation: Synchronizes and consolidates the data.

- Storm Prediction: Activates the model to provide potential storm advisories, communicated through a REST API.

- Sink: Distributes storm advisories to the relevant Kafka topics.

class WeatherTracking(

inputTopics: Seq[String],

outputTopics: Seq[String],

model: Dataset[ModelInputData] => Seq[WeatherAlert])(implicit sparkSession: SparkSession) {

def execute(): Unit =

for {

(weatherDS, dopplerDS) <- source // Step 1

consolidatedDataset <- synchronizeSources(weatherDS, dopplerDS) // Step 2

predictions <- predict(consolidatedDataset) // Step 3

encodedAlerts <- sink(predictions) // Step 4

} yield { encodedAlerts }

Every computational task in the streaming pipeline converts exceptions into options. The tasks are then chained sequentially with a Scala for-comprehension, so the pipeline stops at the first task that returns None.
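The pattern of converting exceptions into options and chaining the steps can be sketched with plain Scala, without Spark. The object OptionPipelineSketch and its three steps (parse, reciprocal, describe) are hypothetical stand-ins for the pipeline tasks.

```scala
import scala.util.Try

object OptionPipelineSketch {
  // Each step wraps a computation that may throw into an Option
  def parse(raw: String): Option[Int] = Try(raw.trim.toInt).toOption

  def reciprocal(n: Int): Option[Double] =
    Try { require(n != 0, "division by zero"); 1.0 / n }.toOption

  def describe(x: Double): Option[String] = Some(s"value=$x")

  // The for-comprehension short-circuits on the first None
  def run(raw: String): Option[String] =
    for {
      n <- parse(raw)
      r <- reciprocal(n)
      s <- describe(r)
    } yield s
}
```

Here run("4") yields Some("value=0.25"), while run("abc") and run("0") both yield None. One trade-off of this design is that None discards the cause of the failure; Try or Either would preserve it if the caller needs to report errors.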

Streaming source

The data is consumed as a data frame df, with the key and value defined as strings (1).

The key is W_${weather station id} for weather data and D_${Doppler radar id} for Doppler data. The value holds the encoded tracking data (toString) (2). The records are then split by data source according to the key prefix (3), and each value is decoded into an instance of WeatherData or DopplerData (4).

def source: Option[(Dataset[WeatherData], Dataset[DopplerData])] = try {

import sparkSession.implicits._

val df = sparkSession.read.format("kafka") // (1)

.option("kafka.bootstrap.servers", "localhost:9092")

.option("subscribe", inputTopics.mkString(","))

.option("kafka.max.poll.interval.ms", 2800) // Kafka consumer settings need the "kafka." prefix

.option("kafka.fetch.max.bytes", 32768)

.load()

val ds = df.selectExpr("CAST(key AS STRING)", "CAST(value AS STRING)")

.as[(String, String)] // (2)

// Convert weather data stream into objects

val weatherDataDS = ds .filter(_._1.head == 'W') // (3)

.map{ case (_, value) => WeatherDataEncoder.decode(value) } // (4)

// Convert Doppler radar data stream into objects

val dopplerDataDS = ds.filter(_._1.head == 'D') // (3)

.map { case (_, value) => DopplerDataEncoder.decode(value) } // (4)

Some((weatherDataDS, dopplerDataDS))

} catch { case e: Exception => None }

For batch queries, the source can also set Kafka consumer configuration parameters such as max.partition.fetch.bytes, connections.max.idle.ms, fetch.max.bytes, or max.poll.interval.ms [ref 6]. Spark forwards these settings to the Kafka consumer when the option name carries the kafka. prefix.

Meanwhile, the decode method transforms a semicolon-delimited string into an instance of either WeatherData or DopplerData.
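The decoder itself is not listed in this article, so the following is only a sketch of what such a method might look like. WeatherRecord is a simplified stand-in for WeatherData, and this decode returns an Option rather than the bare instance used in the pipeline code above; both choices are assumptions made for illustration.

```scala
import scala.util.Try

object WeatherCodecSketch {
  // Simplified stand-in for the article's WeatherData record
  final case class WeatherRecord(
    id: String,
    longitude: Float,
    latitude: Float,
    timeStamp: String,
    temperature: Float,
    pressure: Float,
    humidity: Float) {
    // Same semicolon-delimited layout as WeatherData.toString
    override def toString: String =
      s"$id;$longitude;$latitude;$timeStamp;$temperature;$pressure;$humidity"
  }

  // Returns None when the field count is wrong or a numeric field fails to parse
  def decode(encoded: String): Option[WeatherRecord] =
    encoded.split(";", -1) match {
      case Array(id, lon, lat, ts, temp, pres, hum) =>
        Try(
          WeatherRecord(id, lon.toFloat, lat.toFloat, ts,
            temp.toFloat, pres.toFloat, hum.toFloat)
        ).toOption
      case _ => None
    }
}
```

Note that this encoding only works if the device identifier never contains a semicolon; otherwise the field count no longer matches and the record is rejected.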

To consume the data from Kafka as a stream rather than in batch, use the readStream method instead of read.

Streaming processing: Data aggregation

The goal is to align data from weather stations and Doppler radars that were recorded within roughly 20 seconds of each other. To do so, each time stamp is assigned to a 20-second bucket, and the two datasets are joined on that bucket.
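The bucketKey function used in the code below is not listed in this article. One plausible implementation, assuming time stamps are epoch milliseconds encoded as strings (as in WeatherData), rounds each time stamp down to the start of its 20-second interval. The object name and the bucketMs parameter are hypothetical.

```scala
object TimeBucketSketch {
  // Rounds a non-negative epoch-millisecond time stamp down to the start of its bucket
  def bucketKey(timeStamp: String, bucketMs: Long = 20000L): String =
    ((timeStamp.toLong / bucketMs) * bucketMs).toString
}
```

With this scheme, "1700000012345" and "1700000019999" both map to "1700000000000", while "1700000020000" starts a new bucket. Two readings taken a millisecond apart on either side of a boundary therefore land in different buckets, which is the price of using a simple equality join.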

def synchronizeSources(

weatherDS: Dataset[WeatherData],

dopplerDS: Dataset[DopplerData]): Option[Dataset[ModelInputData]] = try {

val timedBucketedWeatherDS = weatherDS.map(

wData =>wData.copy(timeStamp = bucketKey(wData.timeStamp))

)

val timedBucketedDopplerDS = dopplerDS.map(

dData => dData.copy(timeStamp = bucketKey(dData.timeStamp))

)

// Perform a fast, presorted join

val output = sortingJoin[WeatherData, DopplerData](

timedBucketedWeatherDS,

tDSKey = "timeStamp",

timedBucketedDopplerDS,

uDSKey = "timeStamp"

).map {

case (weatherData, dopplerData) =>

ModelInputData(

weatherData.timeStamp,

weatherData.temperature,

weatherData.pressure,

weatherData.humidity,

dopplerData.windShear,

dopplerData.windSpeed,

dopplerData.gustSpeed,

dopplerData.windDirection

)

}

Some(output)

} catch { case e: Exception => None }

The sortingJoin method is a parameterized method that pre-sorts the data in each partition before joining the two datasets. The implementation is available at Github - Spark fast join implementation.
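To illustrate why sorting first helps, here is a sort-merge join over plain in-memory sequences. It is not the Spark implementation referenced above; the object and method names are hypothetical, and the keys are assumed to be Long values such as bucketed time stamps.

```scala
object SortMergeJoinSketch {
  // Inner join of two collections on a Long key: sort each side once, then merge in a single pass
  def sortMergeJoin[T, U](left: Seq[T], right: Seq[U])(
      leftKey: T => Long,
      rightKey: U => Long): Vector[(T, U)] = {
    val l = left.sortBy(leftKey).toVector
    val r = right.sortBy(rightKey).toVector
    val out = Vector.newBuilder[(T, U)]
    var i = 0
    var j = 0
    while (i < l.length && j < r.length) {
      val lk = leftKey(l(i))
      val rk = rightKey(r(j))
      if (lk < rk) i += 1
      else if (lk > rk) j += 1
      else {
        // Find the end of the run of equal keys on each side
        val lEnd = l.indexWhere(x => leftKey(x) != lk, i) match {
          case -1 => l.length
          case n  => n
        }
        val rEnd = r.indexWhere(x => rightKey(x) != rk, j) match {
          case -1 => r.length
          case n  => n
        }
        // Emit the cross product of the two runs
        for (a <- i until lEnd; b <- j until rEnd) out += ((l(a), r(b)))
        i = lEnd
        j = rEnd
      }
    }
    out.result()
  }
}
```

Once both sides are sorted, matching records are found in one forward pass instead of comparing every pair, which is the same idea a pre-sorted join exploits within each partition.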

The data class for the input to the model is described in the appendix.

Streaming sink

Finally, the sink encodes the weather alerts generated by the storm predictor. Unlike the source, which reads in batch, the sink produces the data to the Kafka topic as a stream.

def sink(stormPredictions: Dataset[StormPrediction]): Option[Dataset[String]] = try {

// Encode dataset of weather alerts to be produced to Kafka topic

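import sparkSession.implicits._

val encStormPredictions = stormPredictions.map(_.toString)

encStormPredictions

.selectExpr("CAST(value AS STRING)")

.writeStream

.format("kafka")

.option("kafka.bootstrap.servers", "localhost:9092")

.option("topic", outputTopics.head) // The "topic" option names a single target topic

.option("checkpointLocation", "/tmp/weather-alerts-checkpoint") // Hypothetical path; the streaming sink requires one

.start()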

Some(encStormPredictions)

} catch { case e: Exception => None }

The sink can also set Kafka producer configuration parameters such as buffer.memory, retries, batch.size, max.request.size, or linger.ms [ref 7]. Use the write method instead of writeStream when the data is to be produced to Kafka in batch.
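Spark passes Kafka client settings through to the consumer or producer only when the option name starts with kafka. A small helper can add that prefix to a plain map of Kafka settings. The object and method names below are hypothetical, and the values in the usage example are illustrative only.

```scala
object KafkaOptionsSketch {
  // Prefixes plain Kafka client settings so Spark forwards them to the Kafka client
  def toSparkOptions(
      bootstrapServers: String,
      kafkaConf: Map[String, String]): Map[String, String] =
    kafkaConf.map { case (key, value) => s"kafka.$key" -> value } +
      ("kafka.bootstrap.servers" -> bootstrapServers)
}
```

For example, toSparkOptions("localhost:9092", Map("linger.ms" -> "5", "retries" -> "3")) produces the keys kafka.linger.ms, kafka.retries, and kafka.bootstrap.servers. The resulting map can be handed to the options method of the reader or writer in place of individual option calls.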

Thank you for reading this article. For more information ...

References

[2] Apache Kafka

Appendix

Storm prediction input

case class ModelInputData(

timeStamp: String, // Time stamp for the new consolidated data, input to model

temperature: Float, // Temperature in Fahrenheit

pressure: Float, // Barometric pressure in millibars

humidity: Float, // Humidity in percentage

windShear: Boolean, // Boolean flag to specify if this is a wind shear

windSpeed: Float, // Average speed for the wind (miles/hour)

gustSpeed: Float, // Maximum speed for the wind (miles/hour)

windDirection: Float // Direction of the wind [0, 360] degrees

)

Storm prediction output

case class StormPrediction(

id: String, // Identifier of the alert/message

intensity: Int, // Intensity of the storm or tornado [1, 5]

probability: Float, // Probability that a storm of the given intensity develops

timeStamp: String, // Time stamp of the alert or prediction

modelInputData: ModelInputData, // Weather and Doppler radar data used to generate/predict the alert

cellArea: CellArea // Area covered by the alert/prediction

)

Patrick Nicolas has over 25 years of experience in software and data engineering, architecture design and end-to-end deployment and support with extensive knowledge in machine learning.

He has been director of data engineering at Aideo Technologies since 2017 and he is the author of "Scala for Machine Learning" Packt Publishing ISBN 978-1-78712-238-3
